Part one of this article discussed facade design and environmental analysis. In the second half, we focus on system rationalization and conceptual cost estimating, and describe in more detail how we used Grasshopper for Rhino for real-time scheduling, tagging, and rationalization.

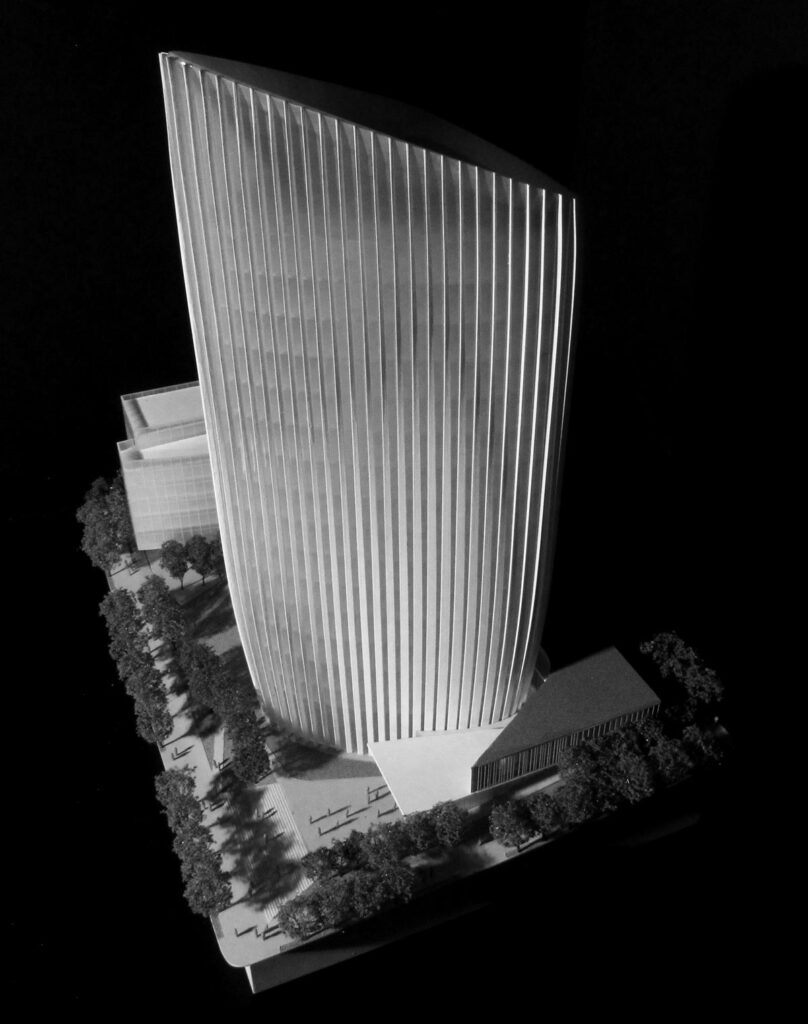

As mentioned in part one, the design-build competition format presented us with a unique challenge. While design-build is often described as a partnership between an integrated design and construction team, in reality the builder remains the principal contract holder and the architect serves as its design consultant. In a design-build competition, contractors are typically required to submit a design proposal along with a guaranteed maximum price, usually within a specified range. They are tasked with managing the design team so that they are not left with a commitment to construct too much building for too little fee. As a result, designers must give cost estimators enough information about their concepts to allow the estimators to assign realistic costs. This is critical for unique or potentially complex design elements: if they are not well described, they can fall victim to large safety factors and be priced out of the project.

In the case of the LAFCT competition, the sculpted geometry of the tower and the high degree of panel variation on the façade immediately attracted the attention of the cost estimating team. To avoid the large markups associated with unknowns in the design, the Yazdani Studio worked closely with a large group of consultants, fabricators, and installers to develop the design to a level of detail high enough to produce reliable but aggressive pricing. Hensel Phelps, the builder on the project, proved to be an excellent partner and assembled a team of top-level consultants and subcontractors to aid us in this effort, including Weidlinger Associates (structural), Arup (facade engineering), and Enclos (curtain wall contractor).

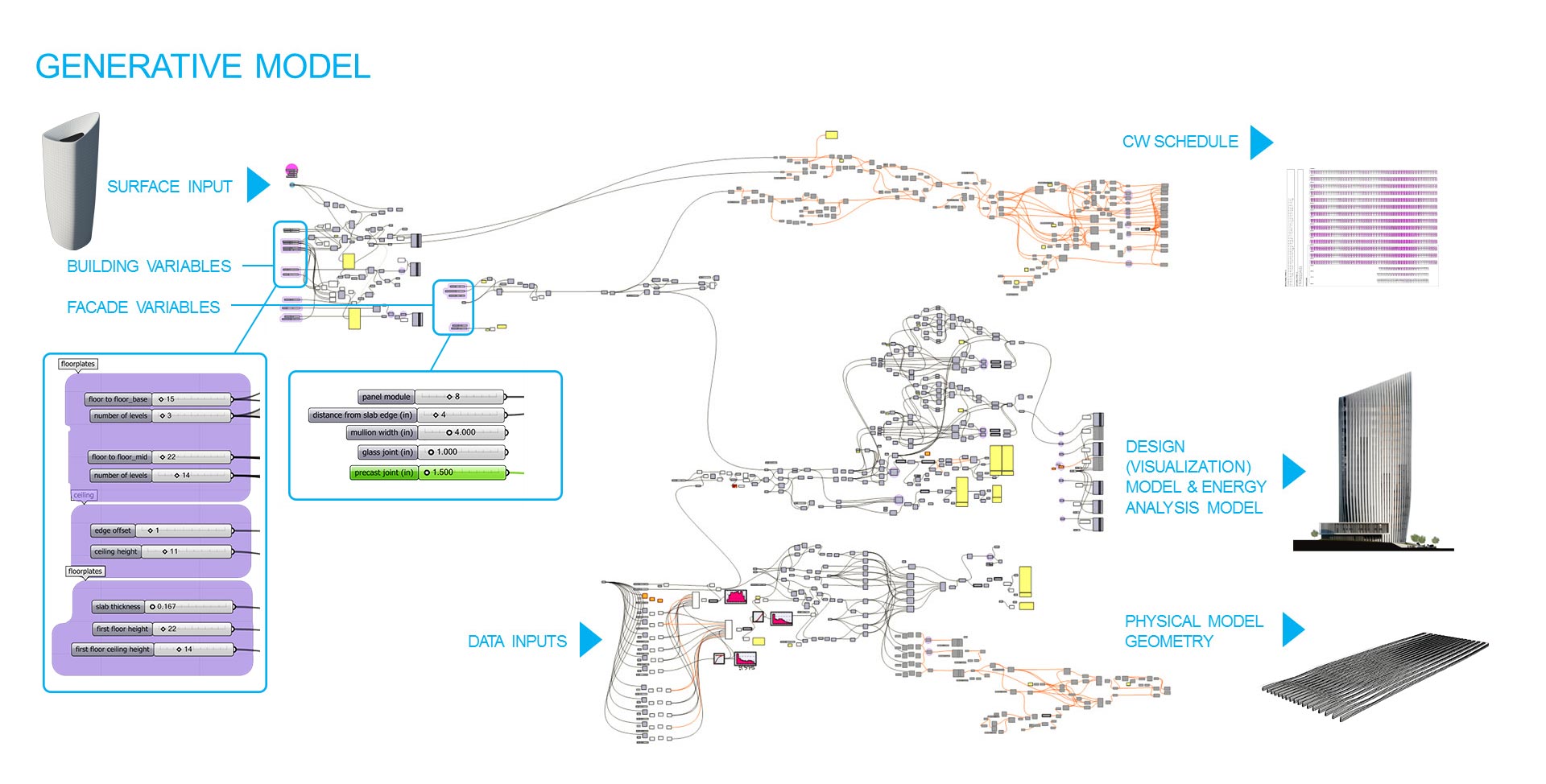

With all of this expertise at our disposal, our biggest challenge was to deliver continuously changing design information to the many outside team members in a format each of them could readily use. This goes much deeper than sharing files over Dropbox. Anyone who has gotten their hands dirty sharing BIM or 3d design information knows that each consultant on a team requires a different file format. A BIM model cannot easily be 3d printed, nor is it at the appropriate level of detail for energy analysis. A cost estimator needs tabular area take-offs, while a model maker requires a watertight, NURBS-based 3d model that can be unrolled or printed. To help the team manage the high level of coordination necessary, we began to tailor our workflow around a generative model that could automatically produce the specific format each consultant needed. By delivering design information in immediately usable formats and saving the time normally lost to translation, we were able to accelerate turnaround and develop the design in greater detail.
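As a minimal sketch of one such output, the estimator's tabular take-off, the Python below assumes the generative model can expose each curtain wall unit as a simple record with a glass width and height. The `Panel` record, the `area_takeoff_csv` function, the column names, and the use of feet are all hypothetical illustrations, not the actual Grasshopper definition. It groups panels by glass width and writes counts and total glass area as CSV.

```python
import csv
import io
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Panel:
    """Hypothetical per-unit record exposed by the generative model (feet)."""
    panel_id: str
    glass_width: float
    height: float


def area_takeoff_csv(panels):
    """Group panels by glass width; return a CSV of counts and total glass area."""
    groups = defaultdict(list)
    for panel in panels:
        groups[panel.glass_width].append(panel)

    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["glass_width_ft", "count", "total_glass_area_sqft"])
    for width in sorted(groups):
        members = groups[width]
        # Glass area only (width x height); frames and spandrels are not modeled here.
        area = sum(p.glass_width * p.height for p in members)
        writer.writerow([width, len(members), round(area, 2)])
    return out.getvalue()


if __name__ == "__main__":
    panels = [Panel("P1", 2.0, 13.5), Panel("P2", 2.0, 13.5), Panel("P3", 3.5, 13.5)]
    print(area_takeoff_csv(panels))
```

The same panel records could be passed to other exporters (meshes for rendering, unrolled fins for the model maker); the point of the sketch is that each consultant's format is derived from one source rather than rebuilt by hand.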

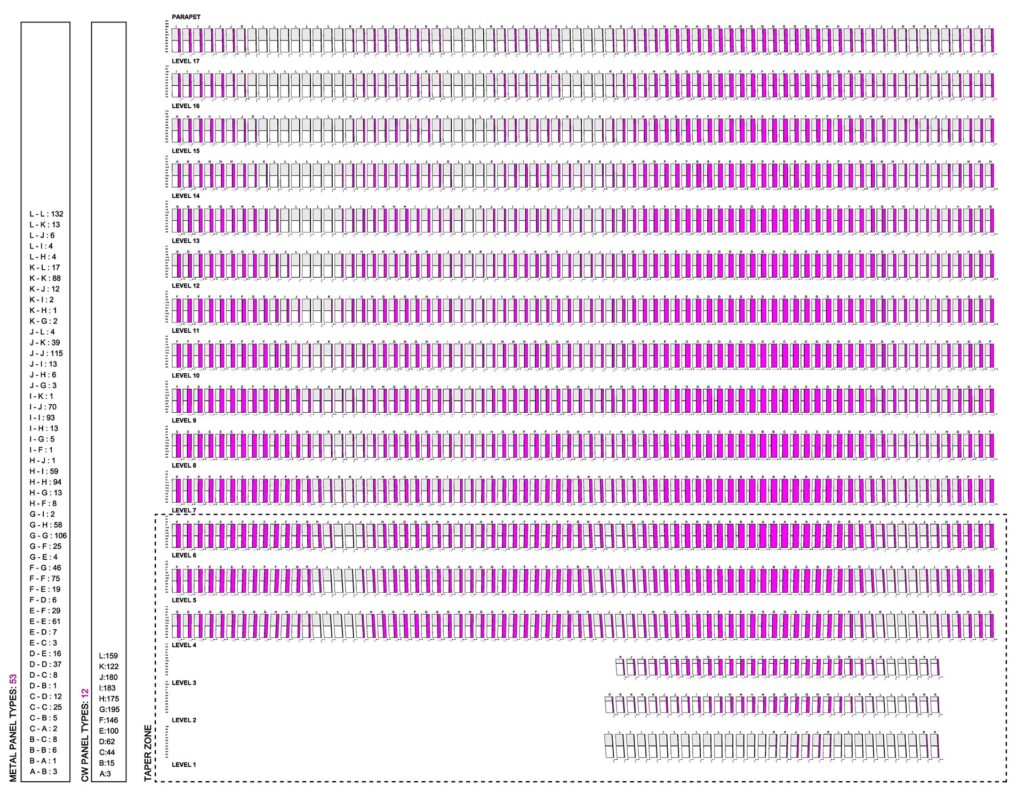

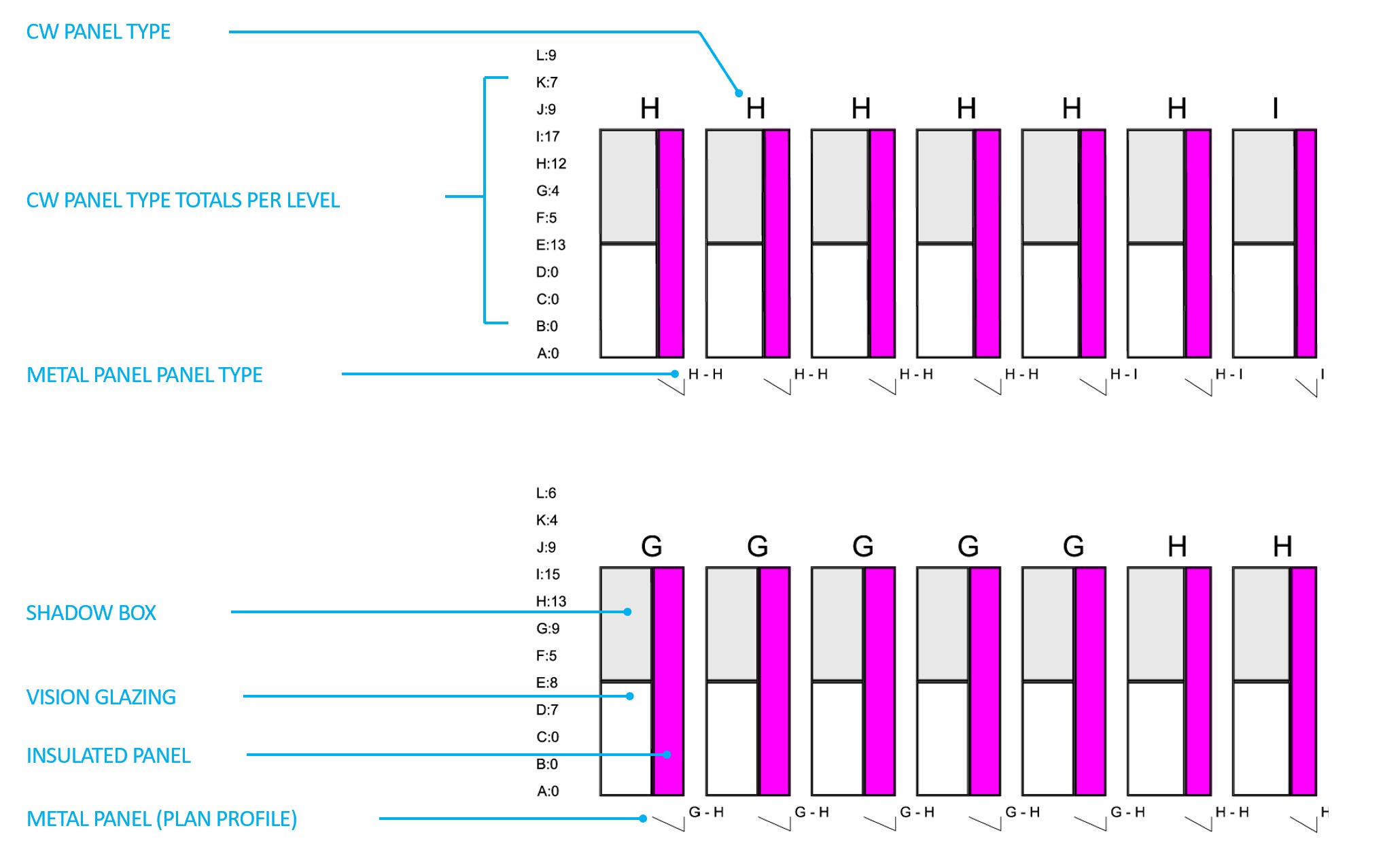

To enable this level of communication, we spoke directly with each consultant's technical staff to understand how their office uses digital information. We discovered that Enclos, the curtain wall contractor, employs a dedicated team of modelers who rebuild 3d geometry in Revit using a custom curtain panel family that can produce tabular data. Their technical and cost estimating staff then use this information to gauge quantities and the complexity of assembly. While this workflow works well under normal circumstances, when a developed BIM model is usually available, it is not practical during a fast-paced competition design effort. Rebuilding a facade with 100+ unique panel configurations in Revit would have introduced a prohibitive two-week lag between design and costing. To remedy the problem, we added components to the Grasshopper definition that continually generated a panel schedule as the skin was adjusted. This panel schedule (shown below) provided a graphic representation of each unit laid out flat, as it would be assembled.

More importantly, the drawing quantified the complexity of our design by tagging unique components and providing quantities for each type. This gave the Enclos team a better understanding of the design and the confidence to assign more accurate costs with tighter contingency margins. We employed a similar strategy with the model maker (The Model Shop), the rendering team (Kilograph), and the energy model. This level of integration allowed us to keep tweaking the design in tandem with the ongoing production effort.

THE WORKFLOW

Grasshopper for Rhino served as the central hub for generating the multiple outputs required by the various consultants. As outlined in the “generative model” graphic above, the Grasshopper definition was derived from a surface sculpted in 3DSMAX using subdivision surface (subD) modeling. This surface was imported into Rhino in OBJ format so that the quadrangle mesh would be preserved. T-Splines was then used to convert the mesh into a NURBS surface, which was referenced by the Grasshopper definition. From there, the definition built the tower using the parameters set in the sliders (enlarged above). Areas of density on the facade and fin depths were controlled by a series of 3d curves surrounding the digital model. By adjusting the distance between these “control curves” and the facade, the team could fine-tune each zone to a desired degree of openness and solar exposure.
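The control-curve idea can be sketched as a distance-driven attractor. The Python below is an illustration under stated assumptions, not the studio's definition: control curves are approximated as 3d polylines, and a fin's depth falls off linearly from a hypothetical `max_depth` at the curve to `min_depth` at a hypothetical influence `radius` (all in model units). Whether closer curves meant deeper fins or shallower ones on the actual project is not stated in the text; the direction here is an assumption.

```python
import math


def distance_to_segment(p, a, b):
    """Shortest distance from 3d point p to the line segment a-b."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    # Parameter of the closest point along a-b, clamped to the segment's endpoints.
    t = 0.0 if denom == 0 else max(0.0, min(1.0, sum(ap[i] * ab[i] for i in range(3)) / denom))
    closest = tuple(a[i] + t * ab[i] for i in range(3))
    return math.dist(p, closest)


def distance_to_polyline(p, polyline):
    """Distance from p to a control curve approximated as a polyline."""
    return min(distance_to_segment(p, polyline[i], polyline[i + 1])
               for i in range(len(polyline) - 1))


def fin_depth(p, control_curves, min_depth, max_depth, radius):
    """Hypothetical linear falloff: max_depth on a curve, min_depth at or beyond radius."""
    d = min(distance_to_polyline(p, curve) for curve in control_curves)
    weight = max(0.0, 1.0 - d / radius)
    return min_depth + weight * (max_depth - min_depth)


if __name__ == "__main__":
    curve = [(0, 0, 0), (10, 0, 0)]
    print(fin_depth((5, 3, 0), [curve], min_depth=2, max_depth=12, radius=6))
```

Moving a curve closer to or farther from the facade changes `d` for every fin point in its zone, which is how a single curve edit can retune a whole region at once. Grasshopper's own curve closest-point and remap components would do the same work inside the definition.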

Outputs for each discipline were generated automatically. For rendering, mesh surfaces were baked out of the Grasshopper definition and exported as .dwg files. These files were linked into 3DSMAX, where surfaces were thickened and materials and texture mapping applied. Because the files were linked rather than imported, we could update the model in 3DSMAX as the design progressed without having to reassign materials.

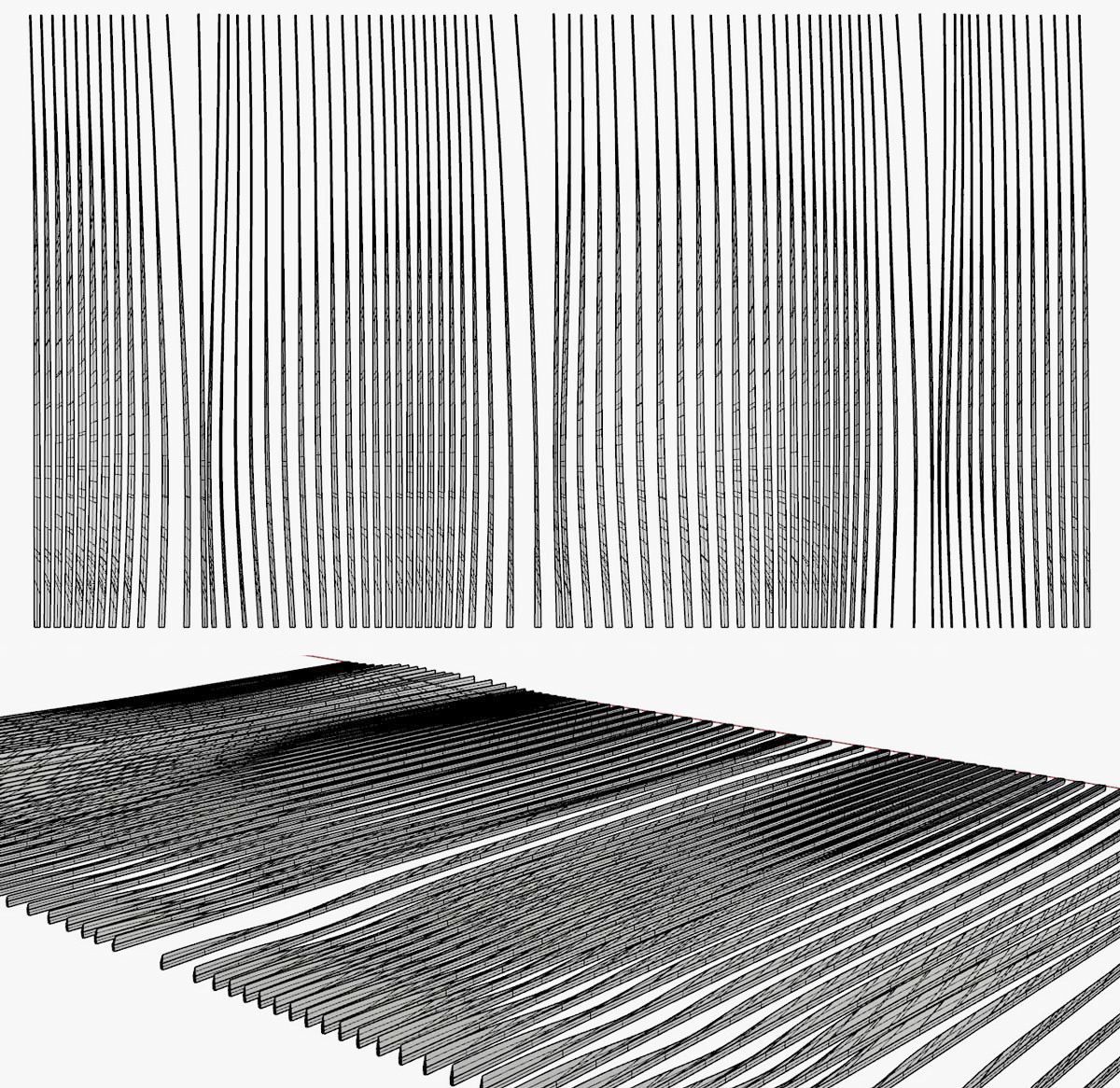

The physical model required its own output, in which the 3d fins were rebuilt from unrolled surfaces.

The guys at The Model Shop were able to mill these fins down to under 1/32″ thick with a sharp tapered edge. The fins were then embedded into slots in a 3d-printed resin “husk”.

The curtain wall panel schedule mentioned earlier was developed by feeding design parameters through a set of components that draw and tag each panel. Our facade system is designed in two parts:

1. a unitized curtain panel made up of solid insulating material and glass.

2. a metal fin.

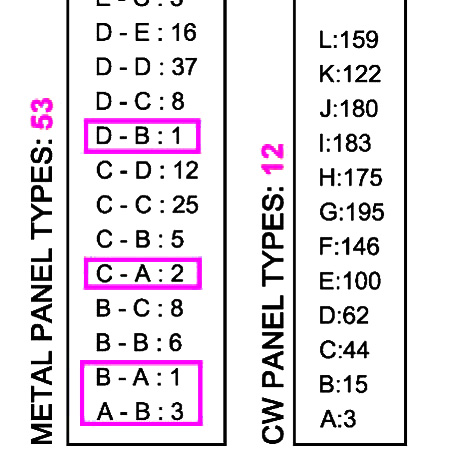

Panel tags were created using the string functions in Grasshopper. Each unique curtain panel glass width was assigned a letter code, and the letter codes were then counted row by row to produce total panel counts. The fins are slightly more complex because each is defined by two variables: top depth and bottom depth. Again, each depth was assigned a letter code, and the codes were paired to define the fin type. Counts were generated in the same way as for the panels. We used the union function in Grasshopper on the fin type list to create a list of only the unique codes.
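The tagging logic described above can be sketched in plain Python as an analogue of the Grasshopper string and set components, not a reproduction of the actual definition. Assumptions: panels arrive as rows of glass widths, fins as `(top_depth, bottom_depth)` pairs, codes are assigned A, B, C… in ascending order of value, and top and bottom depths share one code table. The function names are hypothetical.

```python
from collections import Counter
from string import ascii_uppercase


def letter_codes(values):
    """Map each unique value, in ascending order, to a letter code A, B, C, ..."""
    unique = sorted(set(values))
    if len(unique) > len(ascii_uppercase):
        raise ValueError("more than 26 unique values; extend the code scheme")
    return {v: ascii_uppercase[i] for i, v in enumerate(unique)}


def tag_panels(rows):
    """rows: lists of glass widths. Returns tags per row, counts per row, and totals."""
    codes = letter_codes(w for row in rows for w in row)
    tagged = [[codes[w] for w in row] for row in rows]
    row_counts = [Counter(row) for row in tagged]
    totals = sum(row_counts, Counter())  # add the per-row counts together
    return tagged, row_counts, totals


def tag_fins(fins):
    """fins: (top_depth, bottom_depth) pairs. Fin type = top code + bottom code."""
    codes = letter_codes(d for pair in fins for d in pair)
    types = [codes[top] + codes[bottom] for top, bottom in fins]
    # sorted(set(...)) plays the role of the union step: one entry per unique type.
    return types, Counter(types), sorted(set(types))


if __name__ == "__main__":
    tagged, _, totals = tag_panels([[2.0, 2.0, 3.5], [2.0, 3.5, 3.5]])
    print(tagged, dict(totals))
    types, counts, unique = tag_fins([(6, 12), (6, 12), (12, 18), (6, 18)])
    print(types, dict(counts), unique)
```

Pairing two single-letter depth codes keeps fin tags short and readable on a drawing, and the count per tag is exactly the quantity an estimator needs.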

This allowed us to determine the total number of unique fin configurations in the system and to list the count of each. Looking at the list, we could clearly see that several types occur only sporadically. These could easily be adjusted to a more common neighboring size to further simplify the system.
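A minimal sketch of that simplification step follows, assuming a hypothetical threshold `min_count` below which a size counts as sporadic. The `consolidate_rare` function remaps each rare value to the nearest value that meets the threshold. It is an illustration, not the project's procedure: every substitution changes the facade, so its output is best read as a list of candidates for the design team to review.

```python
from collections import Counter


def consolidate_rare(values, min_count):
    """Remap values occurring fewer than min_count times to the nearest common value.

    Returns (new_values, remap), where remap maps each rare value to its replacement.
    """
    values = list(values)
    counts = Counter(values)
    common = [v for v, c in counts.items() if c >= min_count]
    if not common:
        # Nothing is common enough to snap to; leave the system unchanged.
        return values, {}
    remap = {}
    for v, c in counts.items():
        if c < min_count:
            # Nearest common value; ties go to the more frequent one, then the smaller.
            remap[v] = min(common, key=lambda u: (abs(u - v), -counts[u], u))
    return [remap.get(v, v) for v in values], remap


if __name__ == "__main__":
    depths = [6, 6, 6, 8, 12, 12, 12, 13]
    print(consolidate_rare(depths, min_count=2))
```

Applied to individual depths before pairing, this would also reduce the number of unique fin types, since each fin type is built from two depth codes.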

CONCLUSION

The LAFCT competition is a good example of how newly available software and technology can transform our process, allowing us to push progressive and even experimental design into realms where time, risk, and accepted construction norms usually dominate decision making. By continually collecting data and sharing it with the team in readily usable and easily refreshed formats, we were able to quantify the complexity of our design in ways that previously could only be achieved at the end of documentation. We could also shift some of the time normally lost to digital file sharing into design development and environmental analysis.

The ability to leverage this much specific information about a design as it develops in the early conceptual phase is still relatively new to the architecture industry, but its implications for mitigating cost and construction complexity when pushing progressive design are substantial. While we are typically forced to rely on “rules of thumb” and estimation, these do not bring the same level of assurance that hard data can deliver. In addition, the time traditionally required for estimating during conceptual design doesn’t allow building systems and elements to be cemented and refined in a way where synergistic effects are anything other than superficial or theoretical. In the case of the LAFCT competition, the information harvested from the model gave Enclos the confidence to price the facade system within the demanding cost margin required by Hensel Phelps. Without it, we might have oversimplified the system to only a few panel types, sacrificing the subtle, soft transitions that distinguish the design.